You will have a quadratic equation with two variable 'a' and 'b' and you can plot the quadratic equation as shown below. If you have a real data value for x(n) and y(n) and plug all those values into the equation shown above. Just don't get scared and try on your own. It would look scary, but it can be done by high school math. If you plug in each error equation into the equation above, you would get the following equation. Now I think we all understand why we need to use 'Squared Sum of Errors' as follows. But this absolute equation is hard to analyze mathematically (especially with Calculus). It can solve the problem that a negative error is canceled away with a positive error. This would resolve the issue that is mentioned above.

Then you may ask why not using following equation.

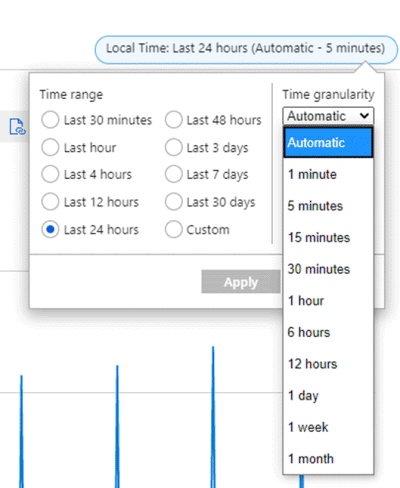

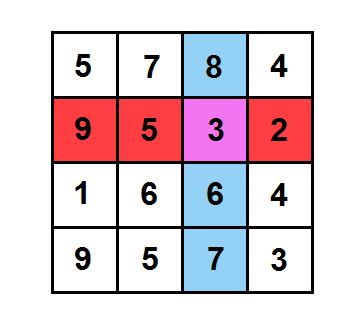

+ e2 = 100 + (-100) = 0) that may give you the impression that there is no error at all. But if you sum up these two errors as they are, it gives you the result of '0' (e1 It is pretty big error and the only difference is the direction of the error and only sign of th evalue is different. For example, let's assume that you have error is 100 and another error = -100. This cannot be used because in some case just summing up the errors would give you completely wrong information. Why not simply adding up all the errors as follows without squaring. That's how the term 'Least Square' came from).Īt this point, you may ask why we need to get all the error squared. (As you see, this is to find the least point of sum of squared error. Once we have all these error equation, the goal is to find 'a' and 'b' that satisfy the following condition. Equation of error for each data point obtained by this method are as follows, The red point is the estimated point (the ideal data points when we assumed that the regression line is y = a x + b) and the blue point is the data that are given to us.Įrror for each data point is the difference between the red point and blue point. The error for each data is defined as shown below. Iv) Find the value of 'a' and 'b' that gives you the minimum of the quadratic function.Īs a first step, let's look at how 'error' for each data point are defined. One is 'Calculus' based and the other one is Matrix (linear algebra) based method. There are largely two kinds of methods that are commonly used for this problem. But I suggest you to do random trial at least several times and then you will understand why we need some kind of mathematical approach and will be highly motivated to follow the mathematical procedure all the way to the end even though sometimes it would be hard and sometimes (actually most of times) it become very boring.

Of course, this is not the one I would recommend. The first idea you can think of is just try random values and keep doing 'try and error'. Now the question become 'How can you figure out the value for 'a' and 'b' to make the line best fit for the given data set ?'. You can change the location and the slope of the line (blue line in the following plot) by applying various different value for 'a' and 'b'. Is a function from junior highschool math). I assume that everybody would understand the linear function can be expressed as y = a x + b (this Let's assume that somebody gave you the following 5 data points in the form of (x(n),y(n)) which can be plotted as shown below and he ask you to find a single straight line (a linear function) that can best represent (best fit) the whole data set. Let's take more practical (intuitive approach). 'Least Square' is a special type of technique for data regression based on 'Sum of square of error between the given data and the estimated function(regression equation).ĭon't worry if this does not make sense to you.
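If you want to try this with actual numbers, here is a minimal Python sketch of the idea. Several things in it are my assumptions, not something taken from the post: the 5 data points are made up, the error is taken as e(n) = y(n) - (a x(n) + b), and the function names `errors`, `sum_of_squared_errors` and `fit_line` are only for illustration. `fit_line` uses the standard closed-form result you get by setting the derivatives of the squared-error sum with respect to 'a' and 'b' to zero.

```python
# Hypothetical data points (x(n), y(n)); NOT the 5 points from the post.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.2, 4.1, 6.2, 7.9, 10.1]


def errors(a, b):
    """Error of each point, assumed here to be e(n) = y(n) - (a*x(n) + b)."""
    return [y - (a * x + b) for x, y in zip(xs, ys)]


def sum_of_squared_errors(a, b):
    """The quantity that 'Least Square' tries to make as small as possible."""
    return sum(e * e for e in errors(a, b))


def fit_line():
    """Return (a, b) minimizing the sum of squared errors (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


# Why plain summing fails: two big errors with opposite signs cancel out.
e1, e2 = 100, -100
plain_sum = e1 + e2              # 0, looks like "no error at all"
abs_sum = abs(e1) + abs(e2)      # 200
squared_sum = e1 ** 2 + e2 ** 2  # 20000

a, b = fit_line()
print("plain:", plain_sum, "absolute:", abs_sum, "squared:", squared_sum)
print("a =", a, "b =", b, "SSE =", sum_of_squared_errors(a, b))
```

You can also call `sum_of_squared_errors` with your own guesses for 'a' and 'b' (the trial and error approach) and compare the result with what `fit_line` gives you.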
